Introduction

Fishing in World of Warcraft is a secondary profession that can be very lucrative but is also very time-consuming and boring. It allows adventurers to fish up various objects, primarily fish and other water-bound creatures, from water, lava, and even liquid mercury. (Wowpedia)

The mechanics of the minigame are really simple:

1. Learn the fishing skill from one of the fishing trainers.
2. Equip a fishing pole.
3. Find a body of water.
4. Cast fishing.
5. Wait for the catch.
6. Click on the bobber.
7. Loot the fish.
8. Go to step 4.

In this article, I’m assuming that the character has already learned the skill, has the proper equipment, and is facing a body of water.

Overview

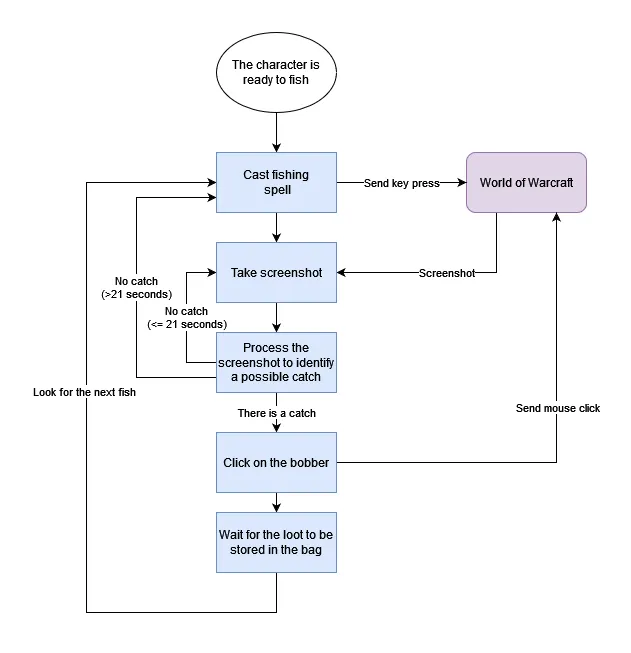

To solve steps 4-8, I came up with the following flowchart:

The flow starts by casting the fishing spell, which can be done by sending a key press event to the main game window. To keep things simple, we'll assume for now that the cast always succeeds.

In the next state, we observe the bobber that appears by repeatedly taking and analyzing screenshots.

If a screenshot shows no catch, we take another one; otherwise, we calculate the bounding box of the bobber and send a mouse click event on it to the main game window.
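A minimal sketch of the click-target computation, assuming the detector reports boxes as `(x1, y1, x2, y2)` pixel coordinates relative to the captured screenshot and that the capture's top-left corner on screen is known. The helper name `click_point` is hypothetical:

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in screenshot pixels


def click_point(box: Box, region_origin: Tuple[int, int] = (0, 0)) -> Tuple[int, int]:
    """Return the screen coordinates of the box center.

    `region_origin` is the (left, top) screen offset of the captured
    screenshot, so boxes found in a cropped capture map back to the screen.
    """
    x1, y1, x2, y2 = box
    if x2 < x1 or y2 < y1:
        raise ValueError(f"malformed box: {box}")
    left, top = region_origin
    center_x = left + (x1 + x2) / 2
    center_y = top + (y1 + y2) / 2
    return round(center_x), round(center_y)


# A bobber detected at (100, 200)-(140, 240) inside a capture whose
# top-left corner sits at screen position (320, 180).
print(click_point((100, 200, 140, 240), region_origin=(320, 180)))  # (440, 400)
```

The click itself could then be sent with `pyautogui.click(*click_point(box, origin))`, which moves the mouse to those screen coordinates and clicks there.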

Clicking the bobber triggers the auto-loot mechanism, which moves the fish into the bag automatically, after which another fishing spell can be cast.

There is always a possibility that something goes wrong (e.g., another character obstructs the view or the fishing cast fails). If there has been no catch in the past 21 seconds, the flow restarts by casting fishing again.
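The flowchart can be sketched as a loop that includes the 21-second restart. This is a hypothetical structure, not the article's implementation: `cast`, `grab`, `find_catch`, and `click` are injected callables, so the loop itself depends neither on pyautogui nor on the model, and the clock and sleep functions can be swapped out for testing.

```python
import time
from typing import Callable, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)

CATCH_TIMEOUT_S = 21.0  # recast if no catch was seen for this long


def fishing_loop(
    cast: Callable[[], None],
    grab: Callable[[], object],
    find_catch: Callable[[object], Optional[Box]],
    click: Callable[[Box], None],
    max_casts: int,
    timeout_s: float = CATCH_TIMEOUT_S,
    poll_s: float = 0.1,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Run cast -> observe -> click cycles and return the number of catches."""
    catches = 0
    for _ in range(max_casts):
        cast()  # assume the cast succeeds, as in the flowchart
        started = clock()
        while clock() - started < timeout_s:
            box = find_catch(grab())  # one screenshot per iteration
            if box is not None:
                click(box)  # auto-loot then moves the fish into the bag
                catches += 1
                break
            sleep(poll_s)  # no catch yet: take another screenshot soon
        # Either a catch was clicked or the timeout expired; recast in both cases.
    return catches
```

Wired to the game, `cast` might be `lambda: pyautogui.press("1")` (assuming fishing is bound to the `1` key), `grab` might be `pyautogui.screenshot`, and `click` might call `pyautogui.click` on the box center. Passing the time functions in explicitly keeps the timeout logic testable without waiting 21 real seconds.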

What’s the catch?

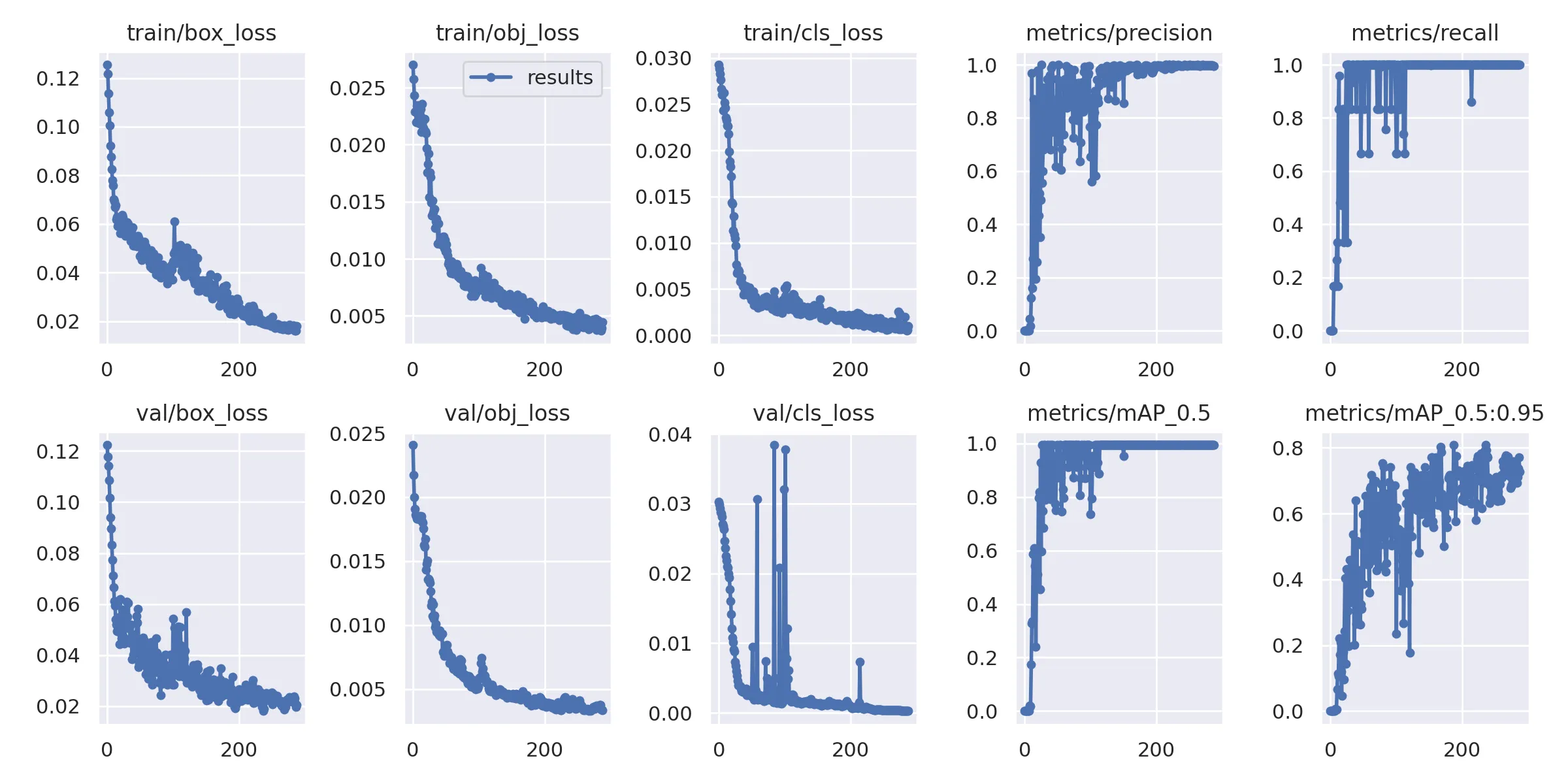

Implementing the core logic with libraries such as pyautogui is straightforward. Identifying a catch is harder: we need a function that takes a screenshot as input and returns whether it contains a catch. For this task, I used YOLOv5 and followed its tutorial on training custom data.
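One way to turn the model's output into that yes/no answer is sketched below. It assumes weights trained with the YOLOv5 custom-data workflow and loaded through `torch.hub` (the file name `best.pt` is a placeholder), plus the class order `bobber` = 0, `splash` = 1 from the dataset configuration. Treating a confident splash as the catch signal and clicking the bobber's box is an assumption, as is the 0.5 confidence threshold.

```python
from typing import Dict, Iterable, Optional, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels

# Class indices follow the order of `names` in the dataset configuration.
BOBBER, SPLASH = 0, 1


def load_model(weights: str = "best.pt"):
    """Load custom YOLOv5 weights via torch.hub (needs torch and network access)."""
    import torch  # imported lazily so find_catch can be used without torch

    return torch.hub.load("ultralytics/yolov5", "custom", path=weights)


def find_catch(
    detections: Iterable[Sequence[float]], conf_threshold: float = 0.5
) -> Optional[Box]:
    """Return the box to click if the detections indicate a catch, else None.

    Each detection is a row (x1, y1, x2, y2, confidence, class), the layout
    of YOLOv5's `results.xyxy[0]`. Assumed rule: a catch means at least one
    confident splash; the click target is the most confident bobber, falling
    back to the splash box when no bobber was detected.
    """
    best: Dict[int, Optional[Tuple[Box, float]]] = {BOBBER: None, SPLASH: None}
    for x1, y1, x2, y2, conf, cls in detections:
        cls = int(cls)
        if conf < conf_threshold or cls not in best:
            continue  # too uncertain, or an unknown class
        current = best[cls]
        if current is None or conf > current[1]:
            best[cls] = ((x1, y1, x2, y2), conf)
    if best[SPLASH] is None:
        return None  # no splash: keep watching
    target = best[BOBBER] if best[BOBBER] is not None else best[SPLASH]
    return target[0]
```

For a screenshot `img` (for example the PIL image returned by `pyautogui.screenshot()`), `results = model(img)` runs inference and `find_catch(results.xyxy[0].tolist())` applies the rule above.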

Datasets

I created two datasets, following the principle that the same location (body of water) cannot appear in both. I manually annotated the images with LabelImg. The datasets contain images of both bobbers and splashes with different water colors, lighting conditions, and so on.
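If screenshots from many places were pooled into a single folder, the same principle could be enforced by splitting on location rather than on individual images. A minimal sketch, where the `(path, location)` pairs, file names, and helper names are hypothetical:

```python
from typing import List, Sequence, Set, Tuple

Sample = Tuple[str, str]  # (image path, location name)


def split_by_location(
    samples: Sequence[Sample], val_locations: Set[str]
) -> Tuple[List[Sample], List[Sample]]:
    """Send every image of a validation location to val, all others to train."""
    train = [s for s in samples if s[1] not in val_locations]
    val = [s for s in samples if s[1] in val_locations]
    return train, val


def shared_locations(train: Sequence[Sample], val: Sequence[Sample]) -> Set[str]:
    """Locations present in both splits; empty when the split is clean."""
    return {loc for _, loc in train} & {loc for _, loc in val}


# Hypothetical file names, grouped by where the screenshot was taken.
samples = [
    ("durotar_01.png", "Durotar"),
    ("ratchet_01.png", "Ratchet"),
    ("orgrimmar_01.png", "Orgrimmar"),
    ("orgrimmar_02.png", "Orgrimmar"),
]
train, val = split_by_location(samples, {"Orgrimmar"})
print(len(train), len(val))  # 2 2
print(shared_locations(train, val))  # set()
```

Splitting by location keeps the validation score honest: the model is evaluated on water it has never seen, rather than on near-duplicates of training frames.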

The dataset configuration for the two classes is as follows:

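```yaml
path: data/fishnet  # dataset root directory
train: train  # training images, relative to path
val: val  # validation images, relative to path
nc: 2  # number of classes
names: ["bobber", "splash"]  # class 0 = bobber, class 1 = splash
```

Train dataset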

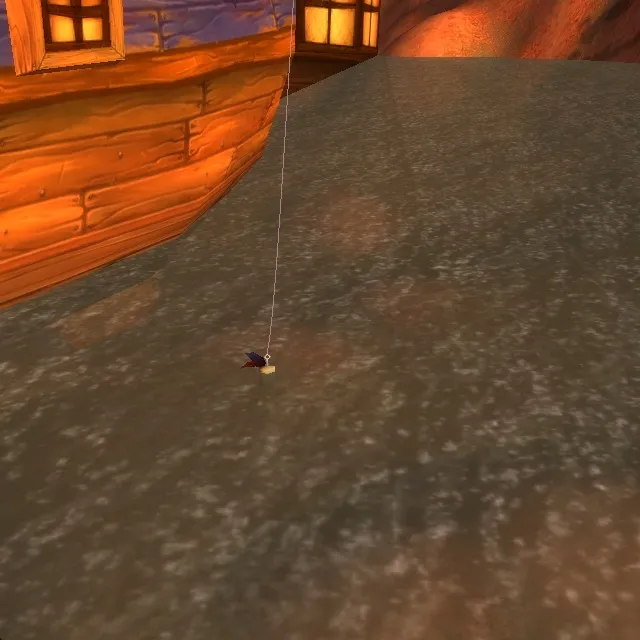

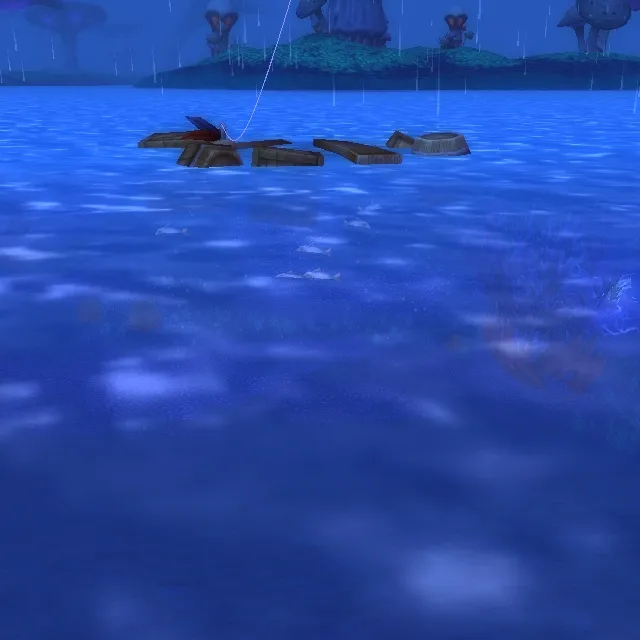

I started gathering training data while leveling fishing with my character in low-level zones. There are 140 screenshots in the dataset, some examples are:

Durotar

Ratchet

Stonetalon Mountains

Zangarmarsh

Validation dataset

The validation dataset contains 24 screenshots of bobbers and splashes taken in the capital cities. Some examples taken in Orgrimmar:

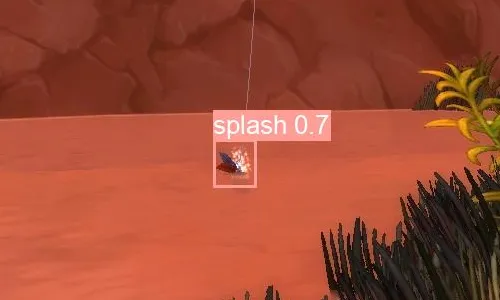

Example model outputs:

Conclusion

The resulting model identifies bobber and splash bounding boxes in real time with good precision and recall, even though it was trained on only 140 screenshots.
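For reference, precision is TP / (TP + FP) and recall is TP / (TP + FN), where a prediction counts as a true positive if it overlaps a not-yet-matched ground-truth box of the same class with an intersection over union (IoU) of at least some threshold, commonly 0.5. The sketch below shows this greedy matching for one class in one image; it is an illustration, not YOLOv5's own evaluation code.

```python
from typing import Sequence, Set, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(
    preds: Sequence[Tuple[Box, float]], truths: Sequence[Box], iou_thr: float = 0.5
) -> Tuple[float, float]:
    """Greedily match predictions (highest confidence first) to ground truth."""
    matched: Set[int] = set()
    tp = 0
    for box, _conf in sorted(preds, key=lambda p: p[1], reverse=True):
        best_j, best_iou = None, iou_thr
        for j, gt in enumerate(truths):
            if j in matched:
                continue  # each ground-truth box can be matched only once
            overlap = iou(box, gt)
            if overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j is not None:
            matched.add(best_j)
            tp += 1
    fp = len(preds) - tp  # predictions with no matching ground truth
    fn = len(truths) - tp  # ground-truth boxes the model missed
    precision = tp / (tp + fp) if preds else 0.0
    recall = tp / (tp + fn) if truths else 0.0
    return precision, recall


truths = [(0, 0, 10, 10), (20, 20, 30, 30)]
preds = [((1, 1, 10, 10), 0.9), ((50, 50, 60, 60), 0.8)]
print(precision_recall(preds, truths))  # (0.5, 0.5)
```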

The model would make it possible to automate fishing in World of Warcraft, which I do not endorse or recommend, as it can lead to account suspension. Please use this article for educational purposes only.