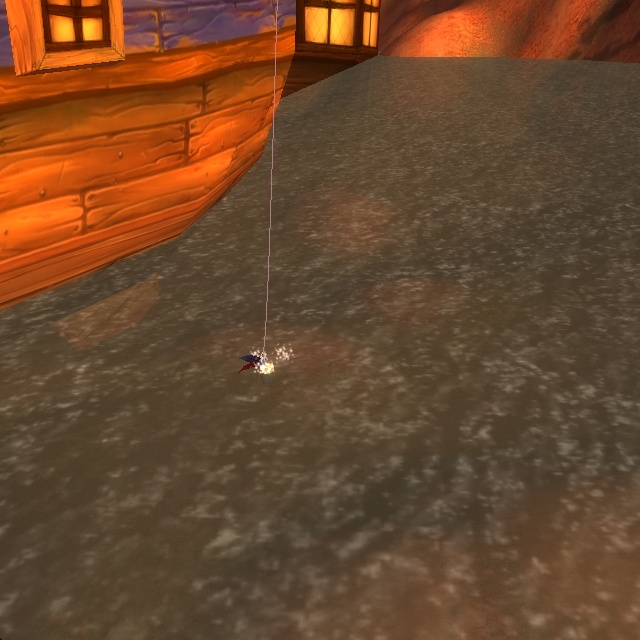

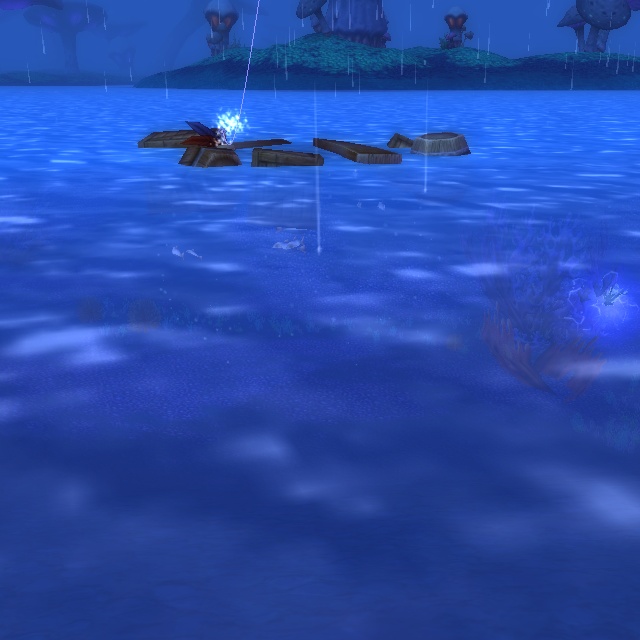

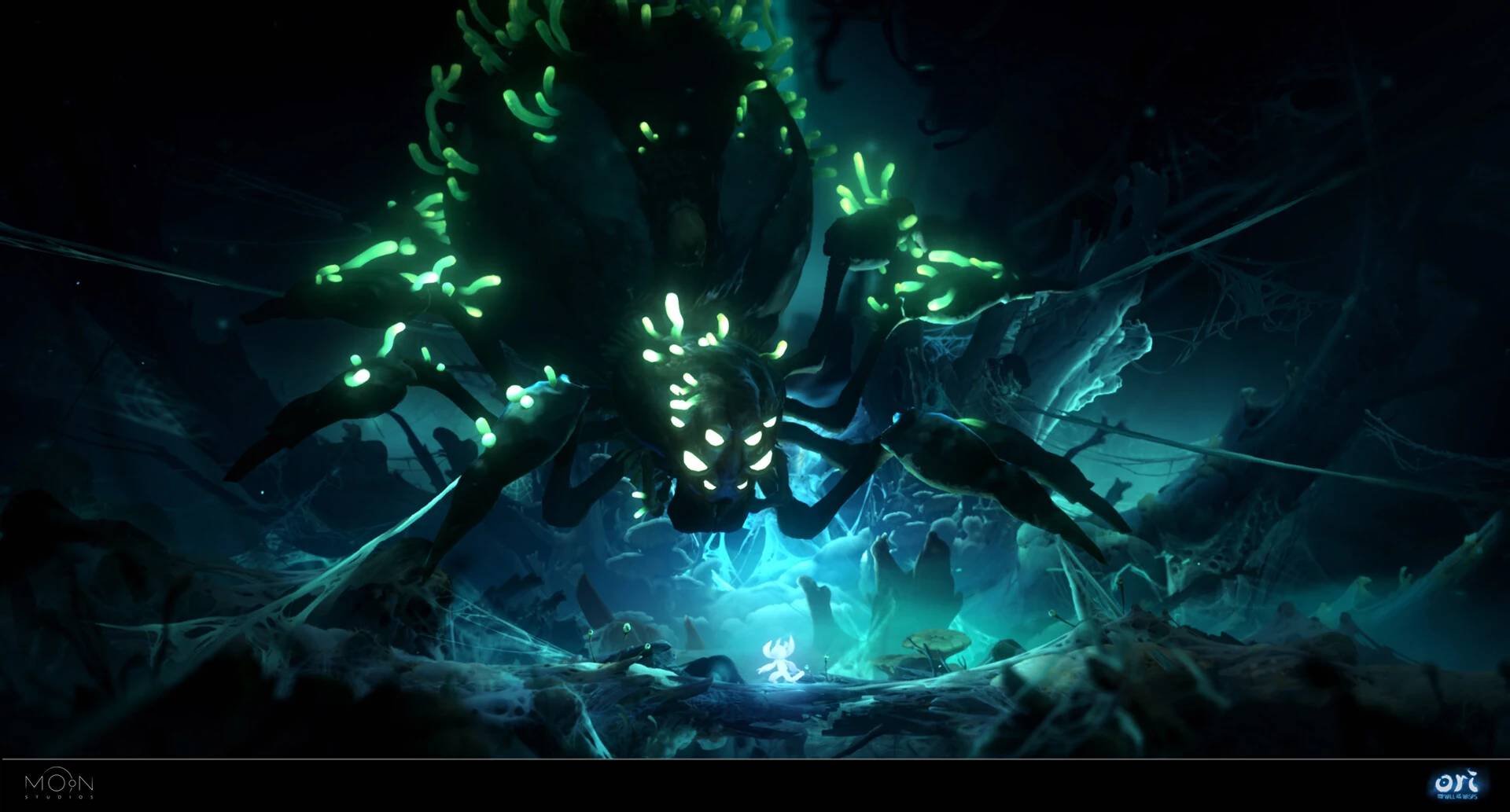

Ori and the Will of the Wisps is a platform-adventure video game. Unlike its predecessor, it does not let you change the difficulty once you have started playing. The only way to adjust the difficulty is to start the game again from the very beginning, which means losing all the progress you have made so far.

Having already spent a good 10 hours exploring the areas and fighting the different bosses, I found myself face to face with my nemesis, Mora, the giant spider. I came across multiple complaints online regarding this fight, and I even looked up guides on how to defeat this boss, but unfortunately, none of them were helpful. The only realistic way for me to proceed was to set the difficulty to easy.

Instead of starting the game all over again, I wanted to modify my existing save file. Unfortunately, I couldn't find any editor online with this feature, so I decided to write my own editor for changing the difficulty.

Risks

Before starting the implementation, I identified the following risks. If any of them materialized, implementing the difficulty changer would be infeasible, and simply restarting the game would be far more efficient.

- The save files are encrypted, compressed or obfuscated.

- The difficulty variable is protected by a hash or a checksum.

- The implementation takes more than 10 hours.
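The first risk can be checked roughly before investing any time. The sketch below is not part of the original tool: `shannon_entropy` is a hypothetical helper, and the approach is a common heuristic rather than a proof. It assumes that compressed or encrypted data looks close to random (entropy near 8 bits per byte), while a plain serialized save file tends to score noticeably lower.

```python
import math
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the Shannon entropy of `data` in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    counts = Counter(data)
    total = len(data)
    # Sum p * log2(1 / p) over every byte value that occurs in the data.
    return sum((n / total) * math.log2(total / n) for n in counts.values())


# Synthetic inputs for illustration only, not real save data.
print(shannon_entropy(b"\x00" * 1024))         # a single repeated byte
print(shannon_entropy(bytes(range(256)) * 4))  # every byte value equally often
```

A value well below 8 does not rule out obfuscation, so the result is only a hint about whether a byte-by-byte comparison is worth trying.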

Analysis

I began my analysis by starting a new game on each of the 3 difficulties. I collected the resulting save files from `C:\Users\<user>\AppData\Local\Ori and the Will of The Wisps`, and then implemented a heuristic for finding the offset where the difficulty is stored.

I assumed that the difficulty is stored as an enum with consecutive numeric values that increase as the difficulty increases, such as:

```python
import enum


class Difficulty(enum.Enum):
    Easy = 0
    Medium = 1
    Hard = 2
```

I implemented the following function, which takes the 3 save files that were previously collected, and compares them byte-by-byte. If there is an index such that the value in the easy file is exactly one less than the value in the medium file, which is exactly one less than the value in the hard file, we get an offset matching our assumption.

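```python
def get_possible_difficulty_offsets(
    easy_bytes: bytes, medium_bytes: bytes, hard_bytes: bytes
) -> list[int]:
    # zip stops at the shortest input, so only offsets present in all
    # three files are compared.
    data = zip(easy_bytes, medium_bytes, hard_bytes)
    # Keep the offsets where easy, medium and hard hold consecutive values.
    return [
        i
        for i, (easy_byte, medium_byte, hard_byte) in enumerate(data)
        if hard_byte - medium_byte == medium_byte - easy_byte == 1
    ]
```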
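To make the heuristic concrete, here is a small usage sketch on three made-up 8-byte buffers (synthetic values, not real save data). The function is repeated so the snippet runs on its own.

```python
def get_possible_difficulty_offsets(
    easy_bytes: bytes, medium_bytes: bytes, hard_bytes: bytes
) -> list[int]:
    data = zip(easy_bytes, medium_bytes, hard_bytes)
    return [
        i
        for i, (easy_byte, medium_byte, hard_byte) in enumerate(data)
        if hard_byte - medium_byte == medium_byte - easy_byte == 1
    ]


# Synthetic "save files", invented for illustration only.
easy = bytes([0x10, 0x00, 0x7F, 0x00, 0x05, 0xAA, 0x00, 0x01])
medium = bytes([0x10, 0x01, 0x7F, 0x03, 0x06, 0xAA, 0x01, 0x01])
hard = bytes([0x10, 0x02, 0x7F, 0x01, 0x07, 0xAA, 0x02, 0x01])

offsets = get_possible_difficulty_offsets(easy, medium, hard)
print(offsets)  # [1, 4, 6]
```

Offset 4 matches even though its values are 5, 6 and 7 rather than 0, 1 and 2: the check only requires three consecutive values, so it does not depend on the enum starting at zero. It also means that any unrelated field that happens to differ by one between the files would show up as a candidate, which is why the reported values are worth inspecting by hand.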

The function returns three offsets: 0x145F, 0x1463 and 0x279C.

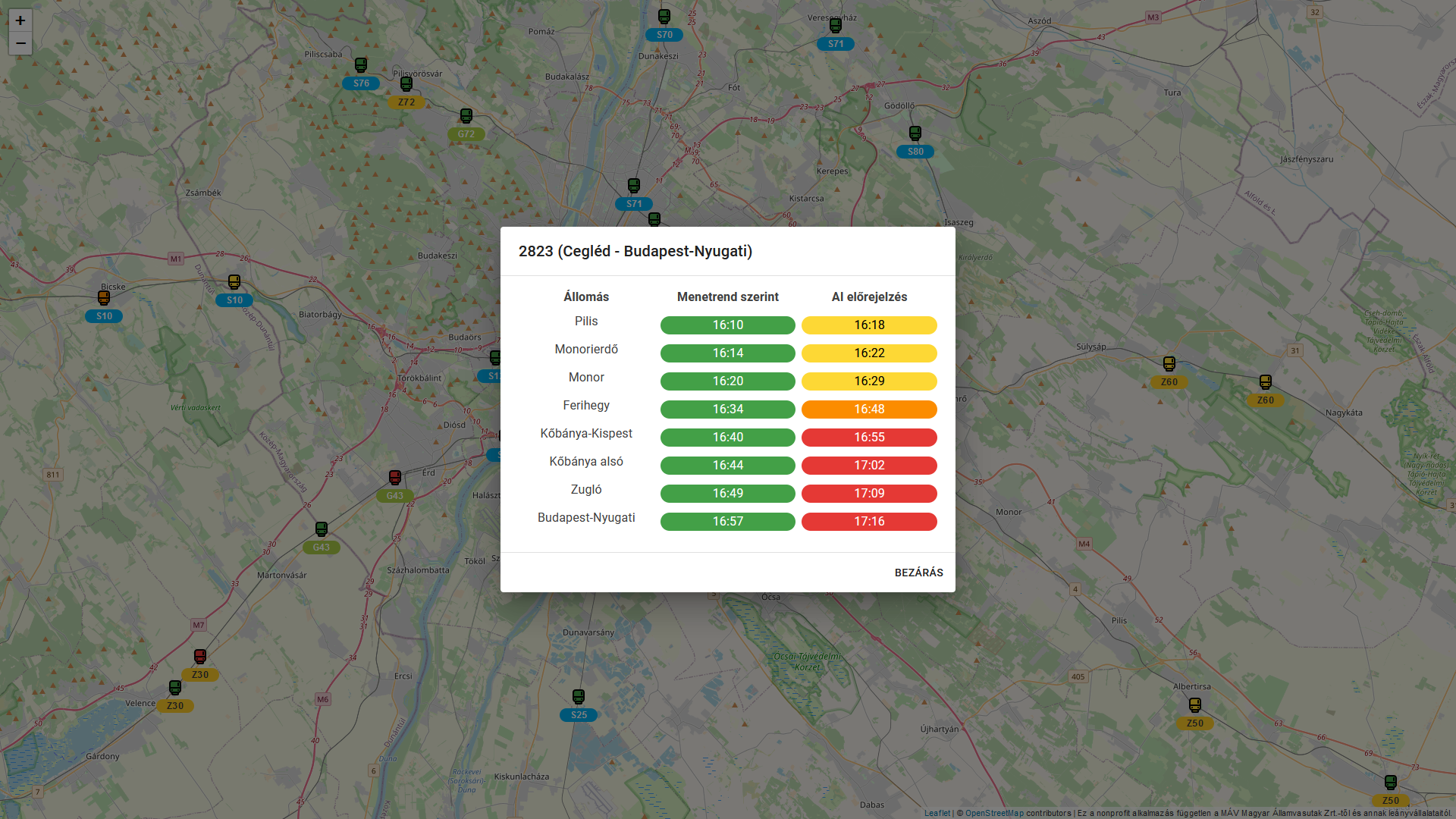

| Save file difficulty | Value at 0x145F | Value at 0x1463 | Value at 0x279C |
|---|---|---|---|
| Easy | 0 | 0 | 0 |
| Medium | 1 | 1 | 1 |
| Hard | 2 | 2 | 2 |

By looking at the values, we can conclude that they all match the assumption, and they seem to be a good indicator of the difficulty to which the files belong.

Getting the difficulty of a save file

The difficulty of a save file can be determined by obtaining the values at the previously determined offsets and comparing them to the difficulty enum, such as:

```python
def maybe_get_difficulty(
    save_bytes: bytes, offsets: list[int] | None = None
) -> Difficulty | None:
    if offsets is None:
        offsets = DEFAULT_DIFFICULTY_OFFSETS
    values = [save_bytes[offset] for offset in offsets]
    # Every offset must hold the same difficulty value; otherwise the
    # file does not match the expected layout and None is returned.
    for difficulty in Difficulty:
        if all(x == difficulty.game_value for x in values):
            return difficulty
    return None
```
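The function relies on two names that are not shown in this post: `DEFAULT_DIFFICULTY_OFFSETS` and the `game_value` attribute of `Difficulty`. The sketch below fills them in under stated assumptions: the default offsets are the three reported by the heuristic, and `game_value` simply returns the enum's numeric value. The actual project may define both differently.

```python
from __future__ import annotations

import enum

# Assumption: the defaults are the three offsets found by the heuristic.
DEFAULT_DIFFICULTY_OFFSETS = [0x145F, 0x1463, 0x279C]


class Difficulty(enum.Enum):
    Easy = 0
    Medium = 1
    Hard = 2

    @property
    def game_value(self) -> int:
        # Assumption: the byte stored in the save file equals the enum value.
        return self.value


def maybe_get_difficulty(
    save_bytes: bytes, offsets: list[int] | None = None
) -> Difficulty | None:
    if offsets is None:
        offsets = DEFAULT_DIFFICULTY_OFFSETS
    values = [save_bytes[offset] for offset in offsets]
    for difficulty in Difficulty:
        if all(x == difficulty.game_value for x in values):
            return difficulty
    return None


# Synthetic zero-filled buffer, large enough to contain every offset.
buffer = bytearray(0x2800)
all_zero = maybe_get_difficulty(bytes(buffer))      # every offset holds 0

for offset in DEFAULT_DIFFICULTY_OFFSETS:
    buffer[offset] = 2
all_two = maybe_get_difficulty(bytes(buffer))       # every offset holds 2

buffer[0x1463] = 1
inconsistent = maybe_get_difficulty(bytes(buffer))  # offsets disagree

print(all_zero, all_two, inconsistent)
```

Returning `None` when the offsets disagree is a useful safety net: it signals that the file probably does not follow the layout the offsets were derived from. A file shorter than the largest offset would raise an `IndexError` instead, so a caller may want to handle that case as well.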

Setting the difficulty of a save file

Similarly, we can modify the byte values at the previously determined offsets:

```python
def change_difficulty(
    save_bytes: bytes, difficulty: Difficulty, offsets: list[int] | None = None
) -> bytes:
    if offsets is None:
        offsets = DEFAULT_DIFFICULTY_OFFSETS
    # bytes is immutable, so patch a mutable copy and convert it back.
    save_bytes_array = bytearray(save_bytes)
    for offset in offsets:
        save_bytes_array[offset] = difficulty.game_value
    return bytes(save_bytes_array)
```

CLI

I also created a CLI (it’s available on GitHub), which is capable of changing the difficulty in save files. The targeted platform is Windows, but it should work on all major operating systems.

Available functionality

Get difficulty of a save file

```
> poetry run py main.py get-difficulty --path "C:\Users\<user>\AppData\Local\Ori and the Will of The Wisps\saveFile0.uberstate"
Difficulty: Medium
```

Set difficulty of a save file

```
> poetry run py main.py set-difficulty --path "C:\Users\<user>\AppData\Local\Ori and the Will of The Wisps\saveFile0.uberstate" --difficulty easy
Is the current difficulty 'medium'? [y/N]: y
Is the desired difficulty 'easy'? [y/N]: y
Creating backup C:\Users\<user>\AppData\Local\Ori and the Will of The Wisps\saveFile0.uberstate.bak.1687803860
Patching file C:\Users\<user>\AppData\Local\Ori and the Will of The Wisps\saveFile0.uberstate
Done
```
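The backup-then-patch sequence in the output above could be sketched roughly as follows. This is not the CLI's actual code: `backup_and_patch` is a hypothetical helper, and the numeric backup suffix is assumed to be a Unix timestamp, which is what the file name in the output suggests.

```python
from __future__ import annotations

import shutil
import tempfile
import time
from pathlib import Path


def backup_and_patch(path: Path, offsets: list[int], value: int) -> Path:
    """Copy `path` to a timestamped backup, then overwrite the given offsets."""
    # Assumption: the suffix is the current Unix time in whole seconds.
    backup_path = path.with_name(f"{path.name}.bak.{int(time.time())}")
    shutil.copy2(path, backup_path)

    patched = bytearray(path.read_bytes())
    for offset in offsets:
        patched[offset] = value
    path.write_bytes(bytes(patched))
    return backup_path


# Demonstration on a throwaway 16-byte file, not a real save.
with tempfile.TemporaryDirectory() as tmp:
    save = Path(tmp) / "saveFile0.uberstate"
    save.write_bytes(bytes(16))
    backup = backup_and_patch(save, offsets=[3, 7], value=2)
    backup_name = backup.name
    backup_hex = backup.read_bytes().hex()
    patched_hex = save.read_bytes().hex()

print(backup_name)
print(backup_hex)
print(patched_hex)
```

Creating the backup before writing means a wrong offset or an interrupted write can be undone by copying the backup back over the save file.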

Re-calculate offsets

```
> poetry run py main.py find-difficulty-offsets --easy-path "./tests/save_files/saveFile_easy.uberstate" --medium-path "./tests/save_files/saveFile_medium.uberstate" --hard-path "./tests/save_files/saveFile_hard.uberstate"
Found possible difficulty offset: 0x145F
Found possible difficulty offset: 0x1463
Found possible difficulty offset: 0x279C
```